
Background: The Importance of Cluster Autoscaler in Kubernetes

Kubernetes Cluster Autoscaler is a critical component in optimizing resource utilization and cost management within Kubernetes environments. By dynamically adjusting the number of nodes in a cluster based on resource demand, it balances performance against cost: it adds nodes when pods cannot be scheduled and removes nodes that are no longer needed, which adapts the cluster to workload fluctuations and limits over-provisioning.


Understanding How Kubernetes Cluster Autoscaler Works

Cluster Autoscaler operates using a control loop that periodically performs the following actions:

1. Fetching cluster state:

Cluster Autoscaler retrieves the current state of the Kubernetes cluster from the API server, including information about nodes, pods, and events.

2. Filtering nodes:

Cluster Autoscaler filters out nodes that shouldn’t be considered for scale-down. For example: nodes with the annotation “cluster-autoscaler.kubernetes.io/scale-down-disabled”: “true”, or nodes running pods that carry the annotation “cluster-autoscaler.kubernetes.io/safe-to-evict”: “false”.
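To make the filtering step concrete, here is a minimal Python sketch that applies the two annotations mentioned above to plain dictionaries. The data shapes and the function name `scale_down_candidates` are assumptions made for illustration; the real Cluster Autoscaler works on Kubernetes API objects and applies more checks than these two.

```python
SCALE_DOWN_DISABLED = "cluster-autoscaler.kubernetes.io/scale-down-disabled"
SAFE_TO_EVICT = "cluster-autoscaler.kubernetes.io/safe-to-evict"


def scale_down_candidates(nodes, pods):
    """Return the names of nodes that neither annotation excludes.

    nodes: list of dicts like {"name": str, "annotations": dict}
    pods:  list of dicts like {"node": str, "annotations": dict}
    (Hypothetical, simplified shapes; not the Kubernetes API types.)
    """
    # Nodes that host at least one pod marked as not safe to evict.
    pinned = {
        pod["node"]
        for pod in pods
        if pod.get("annotations", {}).get(SAFE_TO_EVICT) == "false"
    }
    return [
        node["name"]
        for node in nodes
        if node.get("annotations", {}).get(SCALE_DOWN_DISABLED) != "true"
        and node["name"] not in pinned
    ]


nodes = [
    {"name": "node-a", "annotations": {SCALE_DOWN_DISABLED: "true"}},
    {"name": "node-b", "annotations": {}},
    {"name": "node-c", "annotations": {}},
]
pods = [
    {"node": "node-b", "annotations": {SAFE_TO_EVICT: "false"}},
    {"node": "node-c", "annotations": {}},
]
print(scale_down_candidates(nodes, pods))  # ['node-c']
```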

3. Estimating resource requirements:

Using the retrieved data, Cluster Autoscaler estimates the resource requirements of unschedulable pods and makes scaling decisions accordingly.

4. Simulation-based scaling:

Cluster Autoscaler simulates adding or removing nodes to evaluate the impact on the Kubernetes cluster. This simulation helps Cluster Autoscaler determine the optimal number of nodes needed without violating constraints, such as min/max node counts or taints/tolerations.

Adding nodes:

For unschedulable pods, Cluster Autoscaler runs a simulation to find the most suitable node to add to the cluster, one that can run the pending pods.

Removing nodes:

To improve efficiency and reduce compute costs, Cluster Autoscaler performs bin-packing: it tries to remove nodes (from the candidates that passed the filter in step 2) while keeping all pods running on the remaining nodes.
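The scale-down simulation can be pictured with a small Python sketch. It is a toy model under stated assumptions: only CPU requests are considered, pods are placed first-fit in decreasing order of size, and the function name `can_remove` and the cluster layout are hypothetical. The real simulation also accounts for memory, affinity, taints and tolerations, and other scheduling constraints.

```python
def can_remove(node, nodes):
    """Check whether every pod on `node` fits on the remaining nodes.

    nodes: dict of node name -> {"cpu": allocatable millicores,
                                  "pods": list of requested millicores}
    Only CPU requests are modelled (a deliberate simplification).
    """
    # Free capacity on every other node, based on requests, not usage.
    free = {
        name: spec["cpu"] - sum(spec["pods"])
        for name, spec in nodes.items()
        if name != node
    }
    # Place the largest pods first (first-fit decreasing).
    for request in sorted(nodes[node]["pods"], reverse=True):
        target = next((n for n, cpu in free.items() if cpu >= request), None)
        if target is None:
            return False  # at least one pod would become unschedulable
        free[target] -= request
    return True


cluster = {
    "node-a": {"cpu": 4000, "pods": [1500, 1000]},
    "node-b": {"cpu": 4000, "pods": [3500]},
    "node-c": {"cpu": 4000, "pods": [500, 500]},
}
print(can_remove("node-c", cluster))  # True: both pods fit on node-a
print(can_remove("node-b", cluster))  # False: no node has 3500m free
```

Note that the decision rests entirely on requests: a node whose pods request little but use a lot looks just as removable as a truly idle one.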

5. Executing scaling decisions:

If the simulation shows a need to scale, Cluster Autoscaler sends the appropriate command to the cloud provider’s API to add or remove nodes.

8 Tips for Cost-Effective Configurations

1. Set the minimum node group size with care:

Configure the minimum node count for a node group to ensure adequate resources for your workloads while controlling costs.

2. Use multiple instance types and sizes:

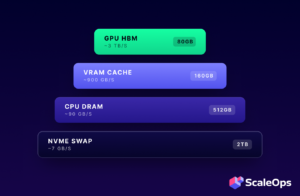

Allowing Cluster Autoscaler to choose from a wide variety of instance types and sizes ensures that your cluster will be able to meet the diverse resource requirements of your workloads. This way you can optimize costs based on the performance needs of each workload. For instance, some workloads might require high memory while others need more CPU power. Providing a mix of instance types enables Cluster Autoscaler to select the most cost-effective option for each scenario, which minimizes over-allocation of resources and reduces costs.

3. Leverage Spot Instances:

Integrate spot instances into your Cluster Autoscaler configuration to reduce costs while maintaining performance.

4. Use Expanders – least waste and price:

Expanders are algorithms that guide Cluster Autoscaler when choosing which node group to scale up. By configuring Cluster Autoscaler to use “least-waste” or “price” expanders, you can optimize your cluster’s resource allocation and control costs more effectively.

“least-waste” expander – selects the node group that results in the least amount of unused resources after scaling up. This minimizes resource wastage and ensures efficient utilization.

“price” expander (only works for GCP at the moment) – focuses on choosing the most cost-effective node group by comparing the price-to-resource ratios of available instance types.
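As a rough illustration of the “least-waste” idea, the Python sketch below scores each node group by the fraction of CPU and memory that would sit unused after scaling up and picks the lowest score. It is a simplification, not the expander's actual code: pending requests are treated as one aggregate (ignoring per-pod fit), and the group names, sizes, and the function `least_waste` are hypothetical.

```python
import math


def least_waste(node_groups, pending_cpu, pending_mem):
    """Pick the node group whose new nodes would leave the least unused capacity.

    node_groups: dict of group name -> (cpu millicores, memory MiB) per node
    pending_cpu, pending_mem: summed requests of the unschedulable pods
    """
    best_name, best_waste = None, math.inf
    for name, (node_cpu, node_mem) in node_groups.items():
        # Nodes needed to cover the pending requests on both dimensions.
        count = max(
            math.ceil(pending_cpu / node_cpu),
            math.ceil(pending_mem / node_mem),
        )
        wasted_cpu = 1 - pending_cpu / (count * node_cpu)
        wasted_mem = 1 - pending_mem / (count * node_mem)
        waste = wasted_cpu + wasted_mem
        if waste < best_waste:
            best_name, best_waste = name, waste
    return best_name


groups = {
    "general": (4000, 16384),  # 4 vCPU, 16 GiB
    "compute": (8000, 16384),  # 8 vCPU, 16 GiB
    "memory": (4000, 32768),   # 4 vCPU, 32 GiB
}
# Pending pods request 6 vCPU and 8 GiB in total.
print(least_waste(groups, pending_cpu=6000, pending_mem=8192))  # compute
```

Here one “compute” node covers the pending pods with 25% of its CPU and 50% of its memory idle, while either of the other groups would need two nodes and leave more capacity unused.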

5. Customize the “skip-nodes-with-local-storage” flag:

By default, this flag is set to true, meaning Cluster Autoscaler will never delete nodes that run pods with local storage, e.g. EmptyDir or HostPath volumes. Setting it to false lets Cluster Autoscaler remove such nodes and can reduce costs, but data on those local volumes may be lost when a node is deleted. Note that on AKS the default value is already false.
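Before changing this flag, it can help to know which pods would be affected. The Python sketch below checks a pod manifest (as a plain dictionary) for EmptyDir or HostPath volumes. The helper names and sample pods are hypothetical, and the check is only an approximation of what Cluster Autoscaler itself evaluates.

```python
LOCAL_VOLUME_TYPES = ("emptyDir", "hostPath")


def uses_local_storage(pod):
    """Return True if the pod manifest declares an emptyDir or hostPath volume."""
    volumes = pod.get("spec", {}).get("volumes", [])
    return any(kind in volume for volume in volumes for kind in LOCAL_VOLUME_TYPES)


def node_blocked_by_local_storage(pods_on_node, skip_nodes_with_local_storage=True):
    """Approximate the flag: with it enabled, one such pod keeps the node alive."""
    return skip_nodes_with_local_storage and any(
        uses_local_storage(pod) for pod in pods_on_node
    )


cache_pod = {"spec": {"volumes": [{"name": "scratch", "emptyDir": {}}]}}
api_pod = {"spec": {"volumes": [{"name": "config", "configMap": {"name": "api-config"}}]}}

print(node_blocked_by_local_storage([cache_pod, api_pod]))         # True
print(node_blocked_by_local_storage([cache_pod, api_pod], False))  # False
```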

6. Set your pods with the right resource requests:

Optimized resource requests for your pods play a crucial role in cost optimization with Cluster Autoscaler. Cluster Autoscaler uses these resource requests to determine whether a node can accommodate additional pods or new nodes should be provisioned.

Underestimating resource requests can lead to overloaded nodes, causing performance degradation and possible pod evictions.

Overestimating resource requests, on the other hand, can result in underutilized nodes, increasing costs due to a large amount of idle resources.

By setting accurate resource requests, you can ensure that Cluster Autoscaler makes well-informed scaling decisions that minimize resource waste and reduce costs.
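A quick back-of-the-envelope calculation shows how much requests drive node count. The Python sketch below considers CPU requests only, and all of its numbers (40 replicas, 4-vCPU nodes, 1000m versus 300m requests) are made up for illustration.

```python
import math


def nodes_needed(replicas, cpu_request_m, node_cpu_m):
    """Nodes required to schedule `replicas` pods, judged by CPU requests alone."""
    pods_per_node = node_cpu_m // cpu_request_m
    if pods_per_node == 0:
        raise ValueError("a single pod requests more CPU than one node offers")
    return math.ceil(replicas / pods_per_node)


# 40 replicas on nodes with 4000m allocatable CPU.
print(nodes_needed(40, cpu_request_m=1000, node_cpu_m=4000))  # 10
print(nodes_needed(40, cpu_request_m=300, node_cpu_m=4000))   # 4
```

If the pods in this example really consume around 250m each, the 1000m request keeps ten nodes running where four would do, and Cluster Autoscaler cannot correct this because it only sees the requests.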

7. Assign “best-effort” priority class to select pods:

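For workloads that can tolerate resource fluctuations and lower performance, assign a low-priority class to their pods. Kubernetes has no built-in priority class named “best-effort” (BestEffort is a QoS class for pods without requests or limits), so this means creating a PriorityClass object with a low value and referencing it in the pod spec. This allows the scheduler and Cluster Autoscaler to prioritize more critical workloads while reducing costs associated with non-essential pods.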

8. Set Pod Disruption Budgets (PDBs) to reduce costs with minimum impact on performance:

Pod Disruption Budgets (PDBs) are Kubernetes objects that specify the minimum number of pods that must remain available (or the maximum number that may be unavailable) for an application, such as a Deployment or StatefulSet, during voluntary disruptions like node scale-downs initiated by Cluster Autoscaler. By setting PDBs, you ensure that Cluster Autoscaler does not remove so many nodes at once that an application is left without enough running pods.
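The effect of a PDB on scale-down comes down to simple arithmetic, sketched below in Python. The sketch assumes integer values for minAvailable or maxUnavailable (percentages are not handled), and the function name `allowed_disruptions` is hypothetical.

```python
def allowed_disruptions(healthy, expected, min_available=None, max_unavailable=None):
    """How many pods may be evicted right now without violating the budget.

    healthy:  pods currently ready
    expected: desired replica count of the application
    Exactly one of min_available / max_unavailable must be given (integers only).
    """
    if (min_available is None) == (max_unavailable is None):
        raise ValueError("set exactly one of min_available or max_unavailable")
    if min_available is not None:
        desired_healthy = min_available
    else:
        desired_healthy = expected - max_unavailable
    return max(0, healthy - desired_healthy)


# 5 replicas, budget of minAvailable: 4.
print(allowed_disruptions(healthy=5, expected=5, min_available=4))  # 1
# One pod is already down, so no further eviction is allowed.
print(allowed_disruptions(healthy=4, expected=5, min_available=4))  # 0
```

A budget that allows zero disruptions (for example minAvailable equal to the replica count) prevents the pods from being evicted at all, which can keep underutilized nodes alive and work against the cost goal.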

ScaleOps: Your Partner in Harnessing Kubernetes Cluster Autoscaler and Optimizing Resource Management

In conclusion, Kubernetes Cluster Autoscaler is an indispensable tool for managing resources effectively and maintaining cost-effective, high-performing clusters. However, it is important to note that Cluster Autoscaler is only aware of the pod resource requests, not their actual usage. This is where ScaleOps fills the gap by analyzing the real CPU and memory utilization of the pods, providing more accurate resource requests based on the actual consumption patterns.

By integrating ScaleOps with Cluster Autoscaler, you can achieve the optimal configuration that considers both the real resource usage of your pods and the dynamic scaling capabilities of Cluster Autoscaler. This powerful combination allows for improved cost efficiency and performance, ensuring your Kubernetes clusters are always running at their best.

With our free trial and a simple Helm installation, you get full visibility into your potential savings and current workload utilization within 2 minutes.
